Building Enterprise AI on Google Cloud TPUs Using JAX

November 19, 2025

Rakesh Iyer

Senior Software Engineering Manager, Google ML Frameworks

Srikanth Kilaru

Senior Product Manager, Google ML Frameworks

JAX has become a vital framework for developing advanced foundation models, and it is widely adopted beyond Google by leading companies such as Anthropic, xAI, and Apple. It serves as the foundation for building scalable, high-performance AI systems, especially on Google Cloud TPUs. This article provides an overview of the JAX AI Stack, a comprehensive, modular platform designed for industrial-scale machine learning.

The platform is designed around flexibility and speed. Because it is built from loosely coupled, specialized libraries, the JAX AI Stack lets users assemble their machine learning environment by choosing the best tool for each task, such as optimization, data loading, or checkpointing. This modularity encourages rapid innovation: new techniques can be integrated without overhauling existing systems.

Modern AI development requires a balance between abstraction and fine-grained control. The JAX AI Stack offers both: high-level abstractions for rapid development and low-level control for performance-critical code.

At its core, the JAX AI Stack comprises four primary libraries, built on JAX and XLA's compiler technology:

– JAX: The foundational array-computation library, optimized for accelerators. Its functional programming model, built around composable transformations such as `jit`, `grad`, and `vmap`, makes it straightforward to scale computations across devices and clusters.

– Flax: An object-oriented interface suited for neural network development, providing flexibility while maintaining JAX’s high performance.

– Optax: A library of composable gradient transformations and optimizers, enabling complex training routines with minimal code.

– Orbax: A resilient, scalable checkpointing library that supports asynchronous, distributed checkpoints, so training can recover from hardware failures even on deployments spanning thousands of nodes.

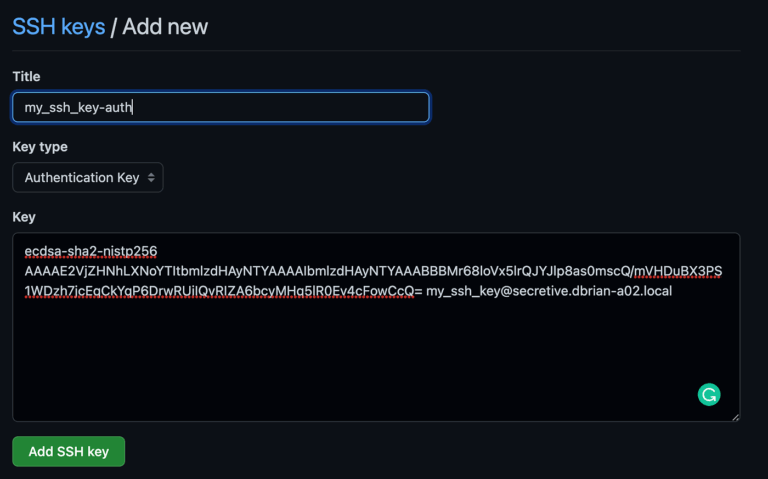

These core libraries can be installed together with a single command: `pip install jax-ai-stack`.

Beyond the core, the ecosystem includes numerous specialized tools covering every stage of the machine learning lifecycle, from data management to deployment. Underpinning it all is infrastructure that scales from a single TPU or GPU to thousands of accelerators, backed by Google's extensive computational resources.
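One way JAX lets the same program run on one device or many is through sharding annotations. The sketch below builds a one-dimensional device mesh from whatever devices are visible (a single CPU on a laptop, or many chips on a TPU slice), shards a batch along a mesh axis, replicates the weights, and calls an ordinary jitted function; XLA partitions the computation according to the input shardings. The axis name `"data"`, the shapes, and the `forward` function are assumptions chosen for illustration.

```python
import numpy as np
import jax
import jax.numpy as jnp
from jax.sharding import Mesh, NamedSharding, PartitionSpec as P

# A 1-D mesh over all visible devices; "data" is an arbitrary axis name.
devices = np.array(jax.devices())
mesh = Mesh(devices, axis_names=("data",))

# Split the batch dimension across the "data" axis; replicate the weights.
batch_sharding = NamedSharding(mesh, P("data", None))
replicated = NamedSharding(mesh, P())

n = len(devices)
# Batch size is a multiple of the device count so it divides evenly.
x = jax.device_put(jnp.ones((8 * n, 128)), batch_sharding)
w = jax.device_put(jnp.ones((128, 4)) * 0.01, replicated)

@jax.jit
def forward(w, x):
    # Unmodified model code: XLA uses the input shardings to partition it.
    return jnp.tanh(x @ w)

out = forward(w, x)
```

The function body never mentions devices; scaling up changes only the mesh, not the model code.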

In summary, the JAX AI Stack is a powerful, modular solution for building large-scale AI models on Google Cloud infrastructure. It combines high performance, flexibility, and resilience, enabling organizations to develop and deploy advanced AI solutions efficiently.

FAQs

Q: What is JAX and why is it important for enterprise AI?

A: JAX is a high-performance numerical computing library that supports scalable array operations and automatic differentiation, both of which are essential for developing advanced AI models efficiently at scale.
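A short sketch of what automatic differentiation and composable transformations look like in practice: `jax.grad` differentiates a loss function, `jax.vmap` maps that gradient over a batch of examples, and `jax.jit` compiles the result with XLA. The linear-model loss and the data values are hypothetical.

```python
import jax
import jax.numpy as jnp

def loss(w, x, y):
    # Squared error of a linear model on a single example.
    return (jnp.dot(w, x) - y) ** 2

# Gradient with respect to the first argument, w.
grad_loss = jax.grad(loss)

# Map over examples (axis 0 of x and y) while sharing w, then compile.
per_example_grads = jax.jit(jax.vmap(grad_loss, in_axes=(None, 0, 0)))

w = jnp.array([1.0, 2.0])
xs = jnp.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
ys = jnp.array([0.0, 0.0, 3.0])
g = per_example_grads(w, xs, ys)  # g == [[2., 0.], [0., 4.], [0., 0.]]
```

Each row is the analytic gradient 2(w·x − y)·x for one example, computed without writing any derivative code by hand.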

Q: How does the JAX AI Stack enhance AI development on Google Cloud?

A: It provides a modular ecosystem of libraries optimized for TPUs, supporting everything from model creation to resilient training, enabling scalable and flexible AI solutions.

Q: Can the JAX AI Stack be customized for specific AI projects?

A: Yes, its modular design allows developers to choose and configure components to suit their needs, making it ideal for diverse and complex AI workflows.
