AI Build Systems Under Siege: How the PromptPwnd Vulnerability Threatens Modern Development Environments

The technology landscape has entered a new era of AI-driven development, where automation and machine learning are at the forefront of software engineering. However, this evolution comes with significant risks, as demonstrated by the recently discovered PromptPwnd vulnerability. This critical flaw in AI-powered build systems could expose sensitive corporate and personal data to theft, raising serious concerns about the security of modern development pipelines.

The Rise of AI in Build Systems

AI has become an integral part of AI-driven build systems, streamlining processes such as code compilation, testing, and deployment. These systems leverage machine learning to optimize workflows, detect errors, and even predict potential issues before they arise. However, the integration of AI introduces new attack vectors that cybercriminals can exploit.

According to recent studies, over 70% of enterprises now use AI-powered development tools, a number that is expected to rise as automation becomes more sophisticated. This widespread adoption makes the PromptPwnd vulnerability particularly concerning, as it could impact a broad range of organizations.

Understanding the PromptPwnd Vulnerability

The PromptPwnd vulnerability is a critical flaw that allows attackers to manipulate AI models within build systems. By injecting malicious inputs, cybercriminals can trick these systems into revealing sensitive data or executing unauthorized commands. This exploit could lead to data breaches, intellectual property theft, and even sabotage of critical infrastructure.

How the Exploit Works

1. Input Manipulation: Attackers craft specially designed prompts that confuse the AI model, causing it to generate unintended outputs.

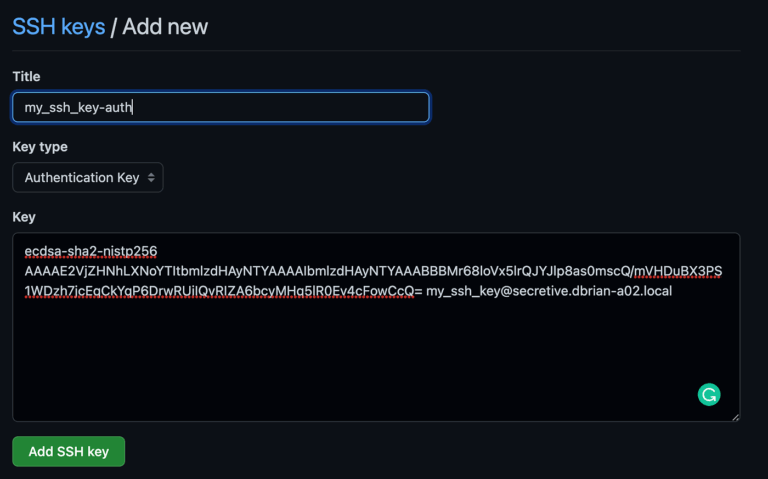

2. Data Exfiltration: Once the AI is compromised, attackers can extract sensitive information, such as source code, API keys, or user credentials.

3. Command Execution: In some cases, the vulnerability allows attackers to execute arbitrary commands within the build environment, leading to further system compromise.

Real-World Examples

The PromptPwnd vulnerability has already been observed in several high-profile incidents, including:

– A financial services firm where attackers used the flaw to steal proprietary trading algorithms.

– A tech startup that suffered a data breach due to compromised build logs containing sensitive customer data.

The Impact of PromptPwnd on AI Build Systems

The consequences of the PromptPwnd vulnerability extend beyond immediate data theft. Organizations that rely on AI-driven build systems face long-term reputational damage, financial losses, and regulatory penalties. Additionally, the exploitation of this flaw could undermine trust in AI-powered development tools, potentially slowing adoption.

Financial Implications

– Direct costs: Organizations may incur expenses related to incident response, legal fees, and regulatory fines.

– Indirect costs: Lost business opportunities, diminished customer trust, and reduced market share.

Security Implications

– Increased attack surface: The vulnerability exposes build systems to a wider range of cyber threats.

– Supply chain risks: Compromised build systems could inadvertently introduce malware into downstream applications.

Mitigation Strategies for Organizations

To address the PromptPwnd vulnerability, organizations must implement a multi-layered security approach. The following strategies can help mitigate the risk:

1. Regular Security Audits: Conduct penetration testing and vulnerability assessments to identify weaknesses in AI models.

2. Input Validation: Implement strict input validation to prevent malicious prompts from reaching AI systems.

3. Access Controls: Enforce role-based access control (RBAC) to limit who can interact with build systems.

4. Security Patching: Ensure that AI models and build tools are regularly updated with the latest security patches.

5. Employee Training: Educate developers and IT staff on the risks of AI-related vulnerabilities and best practices for secure development.

The Future of AI Build System Security

As AI continues to evolve, so too will the threat landscape. The PromptPwnd vulnerability serves as a wake-up call for organizations to prioritize security in their AI-driven development processes. By adopting proactive measures, companies can build resilient build systems that are better equipped to withstand emerging threats.

Conclusion

The PromptPwnd vulnerability highlights the critical need for enhanced security measures in AI-driven build systems. As organizations increasingly rely on automation and machine learning, they must remain vigilant against evolving cyber threats. By implementing robust security practices, companies can safeguard their data and maintain trust with customers and stakeholders.

Frequently Asked Questions (FAQs)

What is the PromptPwnd vulnerability?

The PromptPwnd vulnerability is a critical flaw in AI-driven build systems that allows attackers to manipulate AI models, leading to data theft and unauthorized command execution.

How can organizations protect themselves from this vulnerability?

Organizations can mitigate the risk by conducting regular security audits, implementing input validation, enforcing access controls, applying security patches, and providing employee training.

What are the potential consequences of a PromptPwnd attack?

The consequences include data breaches, financial losses, reputational damage, and supply chain risks, which can undermine trust in AI-powered development tools.

Is the PromptPwnd vulnerability limited to specific industries?

No, the vulnerability affects any organization using AI-driven build systems, including financial services, tech startups, and critical infrastructure providers.

How can developers identify if their build system is vulnerable?

Developers should conduct penetration testing and vulnerability assessments to determine if their AI models and build tools are susceptible to the PromptPwnd exploit.

Leave a Comment