Microsoft 365 Outage Disrupts Teams, Outlook, and Copilot in Japan…

Among disruptive cloud events, one morning in the Asia-Pacific region reminded executives and frontline workers alike that digital tools demand reliability. This LegacyWire report centers on a Microsoft 365 outage that knocked Teams, Outlook, OneDrive, and Copilot offline for thousands of users across Japan and China. The outage stemmed from a critical routing issue in Microsoft’s infrastructure, and its ripple effects reverberated through enterprises, government offices, and small businesses that rely on real-time collaboration and cloud storage. We lay out the core facts first, then unpack what happened, why it happened, and how organizations can strengthen their resilience against similar incidents in the future.

What happened and what didn’t

The incident began on Thursday morning local time when a routing anomaly disrupted the normal flow of traffic to Microsoft 365 services. In practical terms, that meant Teams, Outlook, OneDrive, and the Copilot AI assistant were largely unavailable or functioning with significant latency for a broad swath of users in Japan and China, extending to some nearby markets in the Asia-Pacific region. While the exact timing varied by service and locale, the effect was immediate for daily workflows: teams could not coordinate in real time, emails piled up, shared documents remained inaccessible, and AI-driven copilots could not fetch context or generate content.

Why the routing issue matters

Routing is the digital equivalent of a busy highway system. When a primary route becomes congested or broken, packets (small chunks of data) must be rerouted through alternate paths. The incident underscored two practical truths about cloud-based productivity suites. First, the reliability of modern collaboration hinges not just on server uptime, but on the precision and resilience of network routing. Second, even a single misconfiguration or transient fault at an edge or regional hub can cascade into broad user impact. In this case, the routing issue hit a layered service stack (Teams for chat and calls, Outlook for messaging and calendars, OneDrive for file storage, and Copilot for AI-assisted productivity), amplifying the disruption across multiple critical workflows.
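To make the highway analogy concrete, here is a minimal Python sketch of failover path selection: pick the cheapest route that a health check still considers usable. The route names, the `cost` metric, and the `healthy` flag are all illustrative assumptions; nothing here reflects how Microsoft's network actually selects paths.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    """A hypothetical network path toward a service region."""
    name: str
    cost: int      # lower is preferred (illustrative metric, not a real protocol value)
    healthy: bool  # assumed to come from some external health probe


def pick_route(routes: list[Route]) -> Route:
    """Return the lowest-cost healthy route, or raise if none is usable."""
    usable = [r for r in routes if r.healthy]
    if not usable:
        raise RuntimeError("no healthy route available")
    return min(usable, key=lambda r: r.cost)


# Hypothetical topology: the preferred hub is marked unhealthy to simulate a fault.
routes = [
    Route("primary-regional-hub", cost=10, healthy=False),
    Route("secondary-regional-hub", cost=25, healthy=True),
    Route("cross-region-backbone", cost=60, healthy=True),
]
print(pick_route(routes).name)  # secondary-regional-hub
```

The sketch also shows why a routing fault can cascade: if the health signal itself is wrong or stale, traffic keeps flowing to a broken path, or every client piles onto the same fallback at once.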

Geographic scope and user impact

The outage’s epicenter lay in Japan and China, with widespread consequences across the broader APAC region. Businesses ranging from manufacturing plants to financial services offices grappled with the interruption. For some, the disruption stalled essential communications between headquarters and regional teams, complicating decisions that depended on near-instant access to shared documents and calendars. For others, uncertainty over whether a meeting could proceed or a client email would be delivered led to a temporary pause in decision-making, a real productivity drag that organizations face only during infrequent outage events.

Industry-wide effects

- Corporate operations: Project collaboration slowed as Teams messages lagged or failed to sync, and file sharing stalled on OneDrive.

- Customer-facing activities: Email and calendar access affected scheduling, customer communications, and SLA adherence in customer support and sales teams.

- AI-assisted workflows: Copilot functionality, including document drafting and data analysis prompts, was unavailable or unreliable, reducing efficiency for knowledge workers relying on AI copilots.

- Business continuity: Organizations dependent on cloud-first architectures had to activate offline contingencies or temporary manual processes.

Root cause, incident management, and the restoration path

From the outset, Microsoft’s incident reports pointed to a routing fault within the broader cloud network fabric that powers Microsoft 365. Experts framing the incident described a disruption that propagated through regional routing nodes and edge caches, briefly altering how traffic was steered toward the global service backbone. In practice, this meant some users saw timeouts, while others experienced partial content delivery or stale sessions. The company described the issue as a “critical routing error” that necessitated urgent rerouting and traffic stabilization efforts across multiple data centers and network layers.

What Microsoft did—and why

In response, Microsoft prioritized rapid containment and service restoration. Engineers conducted traffic re-mapping, engaged alternate routing paths, and implemented temporary mitigations to reduce the risk of cascading failures. The process required close collaboration with network partners, global service teams, and regional engineers to ensure that once traffic was redirected, services could be stabilized without reintroducing latency or further disruptions. The restoration path involved graduated recovery: some services came back online in minutes, others required more time as caches were flushed, sessions re-authenticated, and clients reconnected to the reinstated paths.
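The graduated recovery described above depends partly on how clients reconnect: if every client retries at the same instant, the restored paths can be overwhelmed again. A common general-purpose pattern is exponential backoff with jitter. The following is a minimal Python sketch of that pattern under stated assumptions; the function names, the `connect` callback, and the default values are hypothetical and are not drawn from any Microsoft 365 client.

```python
import random
import time
from typing import Callable, Optional


def backoff_delays(attempts: int, base: float = 1.0, cap: float = 60.0,
                   rng: Optional[random.Random] = None) -> list[float]:
    """Return one randomized delay in seconds per attempt ("full jitter").

    Each delay is drawn uniformly from [0, min(cap, base * 2**n)].
    """
    rng = rng or random.Random()
    return [rng.uniform(0, min(cap, base * 2 ** n)) for n in range(attempts)]


def reconnect(connect: Callable[[], bool], attempts: int = 5,
              sleep: Callable[[float], None] = time.sleep) -> bool:
    """Call connect() up to `attempts` times, pausing between failures."""
    for n, delay in enumerate(backoff_delays(attempts)):
        if connect():
            return True
        if n < attempts - 1:  # no point waiting after the final failure
            sleep(delay)
    return False


# Demo with a fake connection that succeeds on the third try.
# sleep is stubbed out so the example finishes instantly.
attempts_seen = []


def flaky_connect() -> bool:
    attempts_seen.append(1)
    return len(attempts_seen) >= 3


print(reconnect(flaky_connect, sleep=lambda s: None))  # True
```

The random spread is the point of the design: it staggers reconnecting clients over time instead of letting them arrive in synchronized waves.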

Restoration timeline and current status

By mid-morning local time, a considerable portion of affected users regained access to Teams, Outlook, and OneDrive, with Copilot resuming functionality once its service mesh stabilized. Microsoft’s status dashboards and partner communications indicated a phased recovery, reflecting the complexity of rehydrating a multi-service cloud stack across distinct regions. Even as the primary services ticked upward toward normal operation, some residual latency or occasional degraded experiences persisted for a subset of users, particularly those with cached credentials or long-running sessions. In practical terms, most enterprises reported a return to near-normal service within a few hours, though careful monitoring remained essential as traffic patterns normalized and client devices re-established secure connections.

Lessons learned during recovery

Several culture-shifting lessons emerged from the restoration process. First, a real-time incident response mindset matters more than ever in cloud-first environments. Second, transparent communication—through status pages, internal dashboards, and proactive guidance—helped reduce user frustration and set realistic expectations. Third, the event highlighted the operational value of a robust fallback plan that includes offline channels and parallel workflows so critical operations don’t grind to a halt when cloud services wobble.

Business implications and resilience strategies

For many organizations, outages like this are not merely IT incidents; they are business continuity tests. The impact goes beyond lost emails or delayed meetings. Vendors and partners may need to adjust service-level expectations, while internal risk assessments may prompt a renewed focus on non-cloud redundancies. The event serves as a reminder that resilience is a multi-layered concept, encompassing people, processes, and technology. The following sections outline practical steps leaders can take to harden their operations against similar disruptions in the future.

Operational risk management in a cloud-driven era

- Redundancy and diversification: Avoid single points of failure by distributing critical workloads across multiple clouds or regions where feasible, while balancing cost and performance trade-offs.

- Offline strategies: For mission-critical communications, maintain offline or alternate channels (e.g., local email archiving, secure messaging platforms) to sustain momentum during outages.

- Business continuity playbooks: Update playbooks to include explicit steps for outages affecting collaboration tools, including decision rights, escalation paths, and client notification templates.

- Data portability and access controls: Ensure employees can access essential documents through multiple routes and devices, reducing dependence on a single service for core tasks.

- Incident response rehearsals: Run regular drills that simulate cloud service disruptions, focusing on cross-functional coordination and clear communication.

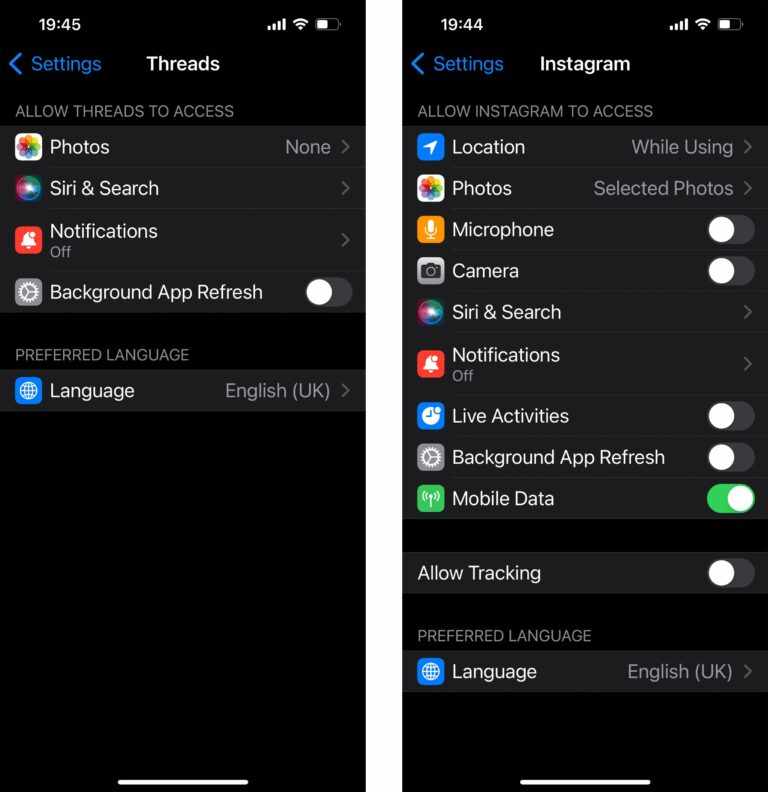

Copilot and AI in outage scenarios

The outage affected Copilot, Microsoft’s AI-assisted productivity companion, a tool that many teams rely on for drafting documents, summarizing data, and generating insights. In the absence of Copilot, teams returned to traditional workflows—manual drafting, more extensive use of templates, and more time spent interpreting data without AI augmentation. This disruption highlighted several truths about AI features in the enterprise: automation remains powerful but fragile in outage conditions, and human-in-the-loop processes become even more valuable during platform downtime. Organizations should view AI capabilities as scalable enhancements rather than indispensable crutches, ensuring that human oversight and alternate methods remain in place for mission-critical tasks.

Security, compliance, and governance during outages

Security and compliance considerations gain additional urgency when cloud services falter. Temporary inaccessibility can complicate identity verification, access controls, and data governance policies. Enterprises must balance visibility with risk management: while users may work offline, sensitive information must continue to be protected according to regulatory requirements. In the wake of an outage, IT and security teams should validate that authentication tokens, encryption keys, and access controls remain aligned with policy, even as services come back online. Recovery is not simply a technical reset—it is a governance exercise that ensures data integrity, audit trails, and incident reports accurately reflect the event and the steps taken to mitigate it.

What organizations can do now to prepare

Despite the rapid recovery from this incident, the episode offers a practical blueprint for organizations aiming to minimize future disruption. The goals are clear: improve resilience, minimize downtime, and maintain productive operations even when cloud services stumble. Here are actionable strategies that leaders can implement today:

- Inventory critical workflows: Map which teams rely on specific Microsoft 365 services in tandem, and identify alternative pathways for those workflows.

- Enhance network resilience: Evaluate routing architectures, edge caching strategies, and partner SLAs to understand exposure and recovery timelines during outages.

- Improve observability: Invest in comprehensive tracing, telemetry, and proactive anomaly detection to catch routing anomalies early and accelerate response.

- Strengthen vendor communication: Establish predefined escalation channels with cloud providers for real-time status updates and remediation plans.

- Plan for scale: Ensure business continuity plans accommodate both small regional outages and larger, multi-service events that cross borders.

- Practice security hygiene: Maintain strong identity and access management, with contingency procedures for offline authentication and data access.
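As one illustration of the observability item in the list above, the Python sketch below flags latency samples that sit far above a rolling baseline. The class name, window size, threshold, and sample values are assumptions chosen for the example; a production setup would more likely rely on a dedicated monitoring stack than on hand-rolled statistics.

```python
from collections import deque
from statistics import mean, stdev


class LatencyMonitor:
    """Flag latency samples far above a rolling baseline (simple z-score test)."""

    def __init__(self, window: int = 20, threshold: float = 3.0, warmup: int = 5):
        self.samples = deque(maxlen=window)  # recent "normal" samples only
        self.threshold = threshold           # standard deviations above the mean
        self.warmup = warmup                 # samples needed before judging

    def observe(self, latency_ms: float) -> bool:
        """Record a sample; return True if it looks anomalous."""
        anomalous = False
        if len(self.samples) >= self.warmup:
            mu = mean(self.samples)
            sigma = stdev(self.samples)
            if sigma > 0 and (latency_ms - mu) / sigma > self.threshold:
                anomalous = True
        if not anomalous:
            # Keep outliers out of the baseline so a sustained fault keeps alerting.
            self.samples.append(latency_ms)
        return anomalous


# Hypothetical probe results in milliseconds; the last value simulates a routing fault.
monitor = LatencyMonitor()
readings = [40, 42, 41, 39, 43, 40, 41, 400]
flags = [monitor.observe(r) for r in readings]
print(flags[-1])  # True
```

Excluding flagged samples from the baseline is a deliberate trade-off: the alert persists for the duration of an outage, but a legitimate, permanent shift in latency would need a manual reset.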

Industry context and statistical backdrop

Outages in large cloud ecosystems have drawn increased attention in recent years as reliance on remote collaboration and AI-enabled workflows grows. Analysts note that while cloud platforms deliver remarkable uptime, outages are not rare events and often reveal the fragility of complex, interconnected systems. The APAC region, with its diverse mix of enterprise sizes and regulatory environments, sometimes experiences different outage patterns than other markets due to regional routing configurations and cross-border data flows. This incident, spanning Japan and China, underscores the need for organizations to craft resilience strategies tailored to regional realities, including workforce distribution, partner ecosystems, and local data governance requirements. The takeaway for leaders is not fear of cloud dependency, but a disciplined approach to redundancy, recovery, and rapid adaptation when real-time services falter.

Demystifying the user experience during outages

From a user’s perspective, outages disrupt the rhythm of work. A project team may stall while waiting for a document link to load, a sales rep cannot confirm a client meeting, and a manager cannot rely on a shared calendar to coordinate a cross-functional launch. The human impact is real: morale can dip when productivity tools are suddenly unreliable, even if the underlying infrastructure remains robust for most of the time. The human-centric takeaway is to translate technical outages into clear, actionable guidance for employees—how to stay productive, whom to contact for critical tasks, and when to switch to alternative channels until services recover. In practice, this means predefined offline steps, quick-reference communication templates, and a culture that respects contingency modes rather than defaulting to emergency improvisation.

Conclusion: turning disruption into a catalyst for stronger resilience

The Microsoft 365 outage in Japan, China, and the wider APAC region serves as a timely reminder that even the most sophisticated cloud ecosystems can experience disruptive events. While the immediate impact was manageable for many organizations, the longer-term implications center on resilience, governance, and the ability to maintain business continuity during service interruptions. For LegacyWire readers, the lesson is clear: invest in robust incident response frameworks, diversify and harden routing strategies, and ensure that people, processes, and technology are aligned to weather similar storms in the future. By combining practical containment measures with a forward-looking resilience mindset, enterprises can reduce downtime, preserve productivity, and emerge stronger from outages rather than simply recovering from them.

FAQ

- What caused the outage? The outage stemmed from a critical routing issue within Microsoft’s cloud network infrastructure, which disrupted traffic routing to core 365 services such as Teams, Outlook, OneDrive, and Copilot. The root cause involved regional routing nodes and edge services, requiring rapid rerouting and stabilization by engineering teams.

- Which services were affected? Teams, Outlook, OneDrive, and Copilot experienced outages or degraded performance. Depending on the user’s location and connection, some services recovered faster than others, with Copilot being the slowest to fully restore in some cases due to its tighter integration into AI-driven workflows.

- How long did restoration take? Restoration varied by service and location. A considerable portion of affected users regained access by mid-morning local time, and most enterprises reported near-normal service within a few hours. Some residual latency persisted briefly as traffic normalized.

- Should organizations expect recurring outages? Cloud service reliability has improved dramatically over the years, but outages remain possible. The prudent approach emphasizes resilience, redundancy, and comprehensive incident response planning to minimize business impact when disruptions occur.

- What can be done to prepare for similar incidents? Establish cross-functional incident playbooks, diversify routing and data access options, strengthen offline workflows, and maintain clear communication channels with employees and partners. Regular drills help ensure teams know precisely what to do when services falter.

- Is Copilot safe to rely on during outages? Copilot is an incredibly useful tool, but it depends on live cloud services. In outages, reliance should shift temporarily to traditional workflows while AI features are offline. This underscores the importance of human-in-the-loop processes and fallbacks for critical tasks.

- What about security and compliance during an outage? Security remains essential even when services are down. Access controls, identity verification, and data governance policies should be maintained, and organizations should verify that recovery preserves data integrity and auditability.

- What steps should leaders take after an outage? Conduct a post-incident review, update incident response plans, reinforce business continuity measures, and practice resilience through drills that simulate similar scenarios across regions and services.

As this incident fades into the broader history of cloud reliability challenges, LegacyWire will continue to monitor the evolution of service-level practices, regional resilience strategies, and the ongoing balance between cloud convenience and enterprise control. The takeaway is not doom for cloud-based collaboration; it’s a renewed commitment to building systems that stay functional when a single component falters, ensuring that organizations remain productive, informed, and agile in the face of unexpected outages.
