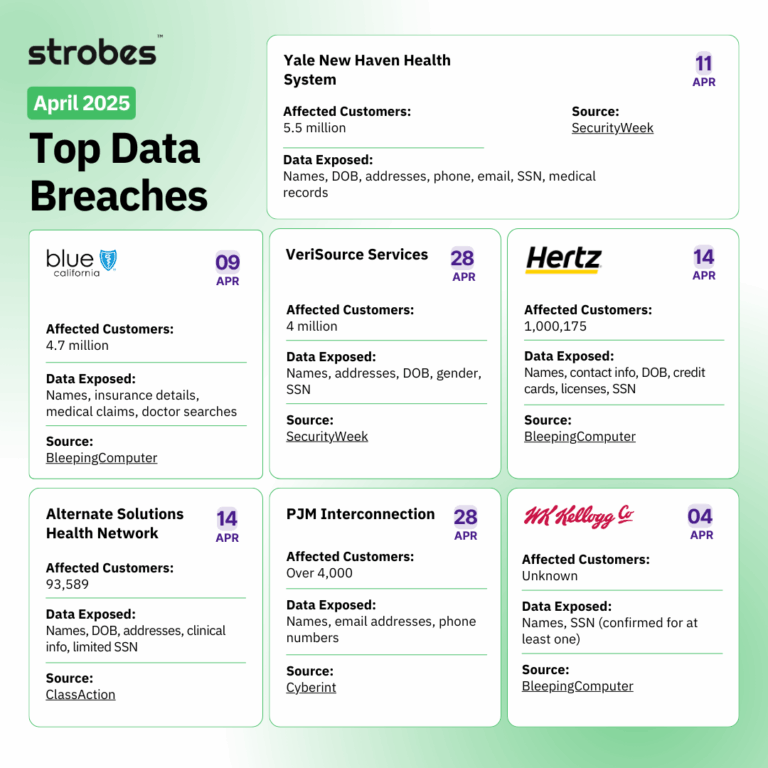

Kernel upgrades can pose real risks to Linux servers when they’re not carefully tested or planned. The CrowdStrike outage in July 2024 is a useful warning: it was triggered by a faulty content update to kernel-level security software on Windows rather than by a kernel upgrade, but it showed how an insufficiently tested change at the kernel level can crash systems, disrupt airport operations and banking networks, and leave security teams scrambling for recovery. With uptime, security, and reliability on the line, every upgrade exposes organizations to the chance of downtime, compatibility issues, or even serious breaches.

Tech, AI, and cybersecurity professionals depend on stable infrastructure to support nonstop digital services. Each step in preparing for a kernel upgrade—tool selection, testing, and security reviews—directly affects trust and service delivery. This guide gives you the process needed to upgrade kernels with confidence and keep your systems protected against the next wave of threats.

Assessing Your Linux Environment Before an Upgrade

Planning a kernel upgrade starts with knowing what you have. Skipping this step can put stability and security at risk. Before rolling out any changes, take stock of your server, the services running, and any compatibility issues that could surface. This way, you spot weak spots and dependencies early instead of facing surprises after an update.

Inventory Your Current System and Kernel

Start with a full inventory. Make sure you know the Linux distribution, kernel version, and hardware specs for each server. List out critical workloads and note any custom kernel modules or drivers.

- Check the current kernel version with `uname -r`.

- List running services and their dependencies.

- Capture installed packages using your package manager (for example, `rpm -qa` or `dpkg -l`).

Having all this data collected lets you track what’s changed and roll back if problems appear. If you’re using Red Hat-based distributions, reviewing the official Red Hat kernel upgrade process gives you a detailed look at package lists and pre-upgrade checks.
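
As a rough illustration of the inventory step, the sketch below snapshots the kernel release, architecture, and installed packages, then compares two snapshots. The function names are hypothetical, and it assumes either `rpm` or `dpkg-query` is on the PATH; on other systems the package list simply comes back empty.

```python
import platform
import shutil
import subprocess


def list_packages():
    """Return sorted 'name version' lines, or [] if neither rpm nor dpkg-query is found."""
    if shutil.which("rpm"):
        cmd = ["rpm", "-qa", "--qf", "%{NAME} %{VERSION}-%{RELEASE}\n"]
    elif shutil.which("dpkg-query"):
        cmd = ["dpkg-query", "-W", "-f", "${Package} ${Version}\n"]
    else:
        return []
    result = subprocess.run(cmd, capture_output=True, text=True, check=False)
    return sorted(line for line in result.stdout.splitlines() if line.strip())


def take_inventory():
    """Snapshot the kernel release (the value `uname -r` prints), architecture, and packages."""
    return {
        "kernel": platform.release(),
        "arch": platform.machine(),
        "packages": list_packages(),
    }


def changed_packages(before, after):
    """Return package lines that appear in only one of the two snapshots."""
    return sorted(set(before["packages"]) ^ set(after["packages"]))
```

Saving the result of `take_inventory()` to a file before the upgrade and comparing it with a fresh snapshot afterwards gives a quick view of what changed.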

Identify Critical Applications and Downtime Tolerance

Assess the impact of downtime for each application or service. Some business workloads need constant uptime, while others have regular maintenance windows.

- Group applications by downtime sensitivity.

- Confirm any redundancy or failover measures are active.

- Validate that monitoring and alerting cover your most critical assets.

This analysis lets you plan your upgrade around the least disruptive windows and set clear expectations. Services that can’t afford interruption may require more careful planning or live patching tools.
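
One way to make the grouping concrete is a small helper that sorts services into tiers by how many minutes of downtime each can tolerate. The tier names and the 15-minute threshold below are illustrative assumptions, not values from any standard.

```python
def group_by_downtime_tolerance(services):
    """Map service names to tiers based on tolerated downtime in minutes.

    The tier names and the 15-minute threshold are illustrative assumptions.
    """
    tiers = {"no-downtime": [], "short-window": [], "maintenance-window": []}
    for name, tolerated_minutes in sorted(services.items()):
        if tolerated_minutes <= 0:
            tiers["no-downtime"].append(name)
        elif tolerated_minutes <= 15:
            tiers["short-window"].append(name)
        else:
            tiers["maintenance-window"].append(name)
    return tiers
```

Services that land in the first tier are the natural candidates for live patching or failover-based upgrades.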

Check Hardware and Compatibility Constraints

Compatibility issues between new kernels and aging hardware or drivers often create post-upgrade headaches. Investigate:

- Known hardware incompatibility with newer kernels.

- Custom kernel drivers or vendor modules.

- Unique firmware requirements.

Make a table for quick reference:

| Server Name | Hardware Model | Custom Drivers | Firmware Needs |
|---|---|---|---|
| ServerA | Dell R730 | Yes | Outdated NIC |
| ServerB | HP ProLiant | No | Current BIOS |
| … | … | … | … |

This helps prioritize upgrade efforts and spot which systems may need extra care.
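
To help fill in the "Custom Drivers" column, a short parser can flag loaded modules that carry taint flags in `/proc/modules`. This is a sketch that assumes the usual line layout, where flags such as `O` (out-of-tree), `P` (proprietary), or `E` (unsigned) appear in parentheses at the end of the line; the function name is hypothetical.

```python
import re

# Matches the optional taint flags at the end of a /proc/modules line, e.g. "(OE)".
_TAINT = re.compile(r"\(([A-Z+\-]+)\)\s*$")


def flagged_modules(proc_modules_text):
    """Return {module_name: flags} for modules whose line ends with taint flags."""
    flagged = {}
    for line in proc_modules_text.splitlines():
        match = _TAINT.search(line)
        if match:
            flagged[line.split()[0]] = match.group(1)
    return flagged
```

On a live server the input would typically come from reading `/proc/modules`; any module it reports deserves a compatibility check against the target kernel.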

Review Current Patch Management and Backup Procedures

Kernel upgrades can occasionally go sideways. It’s smart to make sure your patch management and backups are up to date before you move forward.

- Confirm recent, testable backups are available for every server.

- Check that configuration files and custom scripts are also copied.

- Audit your patch management history to avoid missed dependencies.
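
A simple freshness check can back up the first item in this list. The sketch below flags backup files that are missing or older than a chosen age; the 24-hour default and the function name are assumptions, and a recent timestamp says nothing about whether the backup actually restores.

```python
import os
import time


def stale_backups(paths, max_age_hours=24, now=None):
    """Return paths that are missing or last modified more than max_age_hours ago."""
    now = time.time() if now is None else now
    stale = []
    for path in paths:
        try:
            age_hours = (now - os.path.getmtime(path)) / 3600
        except OSError:
            stale.append(path)  # a missing or unreadable backup counts as stale
            continue
        if age_hours > max_age_hours:
            stale.append(path)
    return stale
```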

If you need to schedule a safe reboot after an upgrade or want to understand reboot best practices, resources like "Do I need to restart server after a Linux kernel update?" provide guidance.

Taking the time to review your servers, dependencies, and backup plans builds a solid foundation for the next steps in any Linux kernel upgrade.

Essential Tools for Kernel Upgrade Preparation

Every successful Linux kernel upgrade relies on strong preparation. Choosing the right tools can make or break maintenance cycles, especially when aiming for zero interruptions. With production workloads on the line, picking technologies that support quick updates and limit reboots means higher confidence and fewer outages. Among the most effective solutions available today are live patching technologies, which let you address security issues and bugs without stopping services.

Live Patching Technologies

Traditional Linux kernel upgrades need a full system reboot. For many workloads, this is a showstopper. Live patching fills the gap for urgent fixes: it applies selected security and bug fixes to the running kernel while the server stays online, although moving to a new kernel version still requires a reboot. Here are some popular live patching solutions:

- Ksplice: Oracle’s Ksplice allows admins to apply security patches to supported Linux distributions in real-time. This keeps services online even during critical patch cycles, reducing urgent reboot windows.

- KernelCare (TuxCare): KernelCare automates kernel security updates, applying patches without rebooting. It’s widely used in enterprise Linux environments where uptime is critical. KernelCare can also handle updates for libraries like OpenSSL and Glibc, which adds another layer of risk mitigation.

- kpatch: Developed by Red Hat, kpatch is an open-source tool built for live patching Red Hat-based systems. System admins can deliver important fixes without scheduling outages that could affect users or business services.

Live patching is far from just a convenience; it prevents unplanned downtime, protects workloads, and keeps businesses running even during emergency fixes. If you want to dive deeper into how live kernel updates work and see vendor-specific guidance, Red Hat’s live patching resource is a solid starting point.

When evaluating live patching, balance your security requirements with operational needs such as high availability, compliance, and patch management. Proper setup ensures you can respond quickly to new threats without pausing critical services. Live patching helps reduce downtime and can transform your server maintenance cycles from disruptive to nearly invisible.
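
When evaluating these tools, it helps to confirm what is actually loaded. The sketch below reads the upstream kernel's livepatch sysfs directory, where each loaded patch has an `enabled` file. It assumes the tool in use registers patches through that interface; vendor products may report status through their own commands instead, so an empty result here is not proof that no patch is applied.

```python
from pathlib import Path


def loaded_livepatches(sysfs_root="/sys/kernel/livepatch"):
    """Return {patch_name: enabled} from the kernel's livepatch sysfs directory.

    Returns an empty dict when the directory is absent, for example when the
    kernel has no livepatch support or no patch is loaded.
    """
    root = Path(sysfs_root)
    if not root.is_dir():
        return {}
    status = {}
    for patch_dir in sorted(p for p in root.iterdir() if p.is_dir()):
        enabled_file = patch_dir / "enabled"
        if enabled_file.is_file():
            status[patch_dir.name] = enabled_file.read_text().strip() == "1"
    return status
```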

Testing Kernel Upgrades Safely

Testing kernel upgrades is an important step that can make the difference between a smooth rollout and a service outage. Skipping proper tests invites unknown compatibility issues, hardware mismatches, driver failures, or even critical security gaps. With business uptime and data on the line, systematic testing makes upgrades predictable and repeatable.

Build a Kernel Testing Plan

A testing plan brings order to the potential chaos of kernel changes. List your test cases, environments, and rollback procedures so that every scenario gets attention. This usually involves:

- Creating a dedicated staging environment that mirrors production

- Scheduling upgrade simulations during off-peak times to monitor real-world effects

- Documenting every test, from driver compatibility checks to performance benchmarks

You should identify what “normal” looks like for each system: resource usage, logs, and user-facing behavior. Only then can you spot outliers after an upgrade.
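
A baseline is easier to use when it is recorded as numbers and compared automatically. As a minimal sketch, the helpers below parse `/proc/meminfo`-style text and flag metrics that moved more than a chosen fraction from the baseline. The 20% tolerance and the function names are assumptions to adjust per system.

```python
def parse_meminfo(text):
    """Parse /proc/meminfo-style text into {field: integer_value}."""
    values = {}
    for line in text.splitlines():
        key, _, rest = line.partition(":")
        parts = rest.split()
        if parts and parts[0].isdigit():
            values[key.strip()] = int(parts[0])
    return values


def outliers(baseline, current, tolerance=0.2):
    """Return {metric: fractional_change} for metrics that moved more than tolerance."""
    flagged = {}
    for name, base in baseline.items():
        now = current.get(name)
        if now is None or base == 0:
            continue  # cannot compute a relative change
        change = (now - base) / base
        if abs(change) > tolerance:
            flagged[name] = round(change, 2)
    return flagged
```

The same `outliers` comparison works for any numeric metric collected before and after the test upgrade, such as load averages or request latency.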

Use Staging and Cloned Lab Environments

Running upgrades directly on a live server is high risk. Instead, use a staging or test lab that matches your real server configurations as closely as possible. Build these environments using the same Linux distribution, hardware (virtual or physical), and application stack as production.

Benefits of this approach include:

- Catching incompatibility issues early

- Verifying that custom drivers or third-party modules still work

- Observing application behavior after new kernel introduction

This hands-on testing method helps you predict and contain failures before they reach any service or user.

Validate Application and Hardware Compatibility

Most outages from kernel upgrades come from unknown dependencies or incompatible drivers. Start by:

- Checking log files for unexpected errors or warnings

- Running automated service health checks

- Testing applications under expected user loads to confirm stability

If your organization uses custom kernel modules or device drivers, validate these against the new kernel version. Not all third-party software keeps up with upstream kernel changes, so ensure they’re updated or replaced as needed.
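
One quick pre-check for custom modules is to see whether a build of each one exists in the target kernel's module tree. The sketch below walks `/lib/modules/<version>` looking for `.ko` files, including compressed ones. The function name is hypothetical, and finding a file only shows the module was built for that kernel, not that it loads or works correctly.

```python
import os
import re

# Module files are named <name>.ko, optionally compressed (.xz, .gz, .zst).
_KO = re.compile(r"^(?P<name>.+)\.ko(\.(xz|gz|zst))?$")


def missing_modules(required, kernel_version, modules_root="/lib/modules"):
    """Return required module names with no module file under the target kernel's tree."""
    available = set()
    tree = os.path.join(modules_root, kernel_version)
    for _dirpath, _dirnames, filenames in os.walk(tree):
        for filename in filenames:
            match = _KO.match(filename)
            if match:
                # Hyphens and underscores are interchangeable in module names.
                available.add(match.group("name").replace("-", "_"))
    return [name for name in required if name.replace("-", "_") not in available]
```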

For an in-depth walkthrough on patch testing and risk reduction, see the Best Practices for Managing Linux Kernel Patches and Updates.

Rollback and Recovery Tests

No test plan is complete without proving you can roll back safely. Practice restoring your backup images, reinstalling the old kernel, and recovering configuration files. Measure how long these steps take and confirm each restores the server to a known good state.
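
Measuring the rollback can be as simple as timing each step of the drill. The helper below is a minimal sketch: it runs labelled steps in order, records how long each took, and stops at the first failure. The step callables are placeholders for whatever your own restore procedure does.

```python
import time


def time_steps(steps):
    """Run (label, callable) pairs in order and return [(label, seconds, ok)].

    Stops after the first step that raises, recording it as failed.
    """
    results = []
    for label, action in steps:
        start = time.monotonic()
        try:
            action()
            ok = True
        except Exception:
            ok = False
        results.append((label, time.monotonic() - start, ok))
        if not ok:
            break
    return results
```

Keeping these timings from each drill shows whether recovery is getting faster or slower over time.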

A solid rollback process provides peace of mind, especially if you uncover late-breaking issues after live deployment. Comprehensive backup strategies are a foundation here; learn more about what a robust process includes in this overview of secure Linux kernel update practices.

Monitor and Document Everything

The best way to spot kernel-related issues quickly is with tight monitoring and detailed record keeping. During and after your test upgrades:

- Monitor system health metrics (CPU, memory, disk I/O)

- Log any error messages or performance dips

- Track how long services take to restart

- Document changes in behavior or configuration

Having detailed reports from your tests guides your main upgrade and provides a baseline for post-upgrade troubleshooting. Over time, your documentation becomes an internal knowledge base, speeding up future server maintenance cycles.

Real-world experience shows that teams who test thoroughly rarely struggle with unexpected downtime after kernel changes. Make your process routine and clear so upgrades become low-stress, predictable projects—not risky leaps.

Security Checks Before and After Kernel Upgrades

Security does not end once the new Linux kernel is running. Each upgrade, no matter how small, demands a careful review before and after going live. Preparing your servers for quick rollback, and having reliable recovery plans, ensures you are ready for the unexpected. Stopping at a quick health check or a “worked in testing” assumption is risky—real safeguards mean real preparation.

Rollback and Recovery Procedures

Kernel upgrades are not always smooth. A single misstep in version compatibility, hardware support, or configuration can bring down production systems. This is why robust rollback and recovery strategies are essential.

Set up the bootloader to support rollback options. Most modern Linux distributions use GRUB 2, which keeps entries for previous kernels. Confirm these options are present by inspecting your /boot/grub2/grub.cfg or /boot/grub/grub.cfg file on each system. You want to see more than just the latest kernel in the boot menu. If your environment uses UEFI, check that the boot manager includes all recent kernels, not just the newest version.
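
Inspecting the GRUB configuration can be scripted. As a rough sketch, the function below pulls the distinct kernel image paths from the `linux` lines in `grub.cfg` text, so you can confirm that more than one kernel is referenced. One caveat: some distributions keep per-kernel entries in separate Boot Loader Specification files (commonly under `/boot/loader/entries`) rather than in `grub.cfg`, so an empty result on those systems does not mean there is no fallback kernel.

```python
import re

# Matches "linux", "linux16", or "linuxefi" lines and captures the kernel image path.
_LINUX_LINE = re.compile(r"^\s*linux(?:16|efi)?\s+(\S+)", re.MULTILINE)


def kernels_in_grub_cfg(cfg_text):
    """Return the distinct kernel image paths referenced in grub.cfg text, in order."""
    seen = []
    for image in _LINUX_LINE.findall(cfg_text):
        if image not in seen:
            seen.append(image)
    return seen
```

If the returned list has a single entry, there is no older kernel to fall back to from the boot menu.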

Testing rollback is as important as testing the upgrade itself. Schedule simulated failures—intentionally boot into an older kernel, verify network and service functionality, and measure how long your servers take to recover. If time is tight, prioritize business-critical systems for this hands-on testing. Performing a cold reboot with an older kernel and checking that all key workloads come back proves your systems are ready for anything, not just best-case scenarios.

Backups must be current, complete, and tested. Store not only files and databases but also OS images and configuration states. Modern backup solutions let you restore entire servers or snapshots in short order. Document every recovery process step—from retrieving backups to restoring full functionality. Store recovery guides with your ops team and practice mock recoveries when possible. Keeping step-by-step documentation reduces stress and confusion when speed matters most.

For a stronger baseline, follow this basic checklist before and after kernel upgrades:

- Confirm previous kernels are listed and bootable in the bootloader.

- Practice recovery using full-system and configuration backups.

- Validate your infrastructure’s support for secure boot and fast fallback.

- Maintain updated documentation for all rollback and restore steps.

Building repeatable rollback and recovery paths means you are not just hoping your upgrades work. You are ready, armed with working options when failures happen. Sound rollback and recovery procedures stand as your safety net—making kernel upgrades a controlled risk, not a blind leap.

For readers interested in wider disaster recovery planning for Linux, Red Hat’s guide to system recovery procedures offers additional detail on best practices.

Industry Best Practices and Emerging Trends

Kernel upgrades are no longer a routine task left to background maintenance. They now sit at the intersection of uptime, cybersecurity, and compliance. Organizations that rise above the basics focus on structured step-by-step upgrades, active monitoring, and building repeatable processes that reduce risk. Paying attention to both lessons learned from seasoned sysadmins and new tools from the Linux ecosystem helps teams avoid pitfalls and gain more confidence with every upgrade.

Systematic Approach to Upgrade Scheduling

A thoughtful upgrade schedule minimizes risk. Leading organizations keep a rolling calendar for kernel and security updates. This schedule matches maintenance windows with critical workloads. Small batches are handled first, with non-production environments leading the rollout. By using a phased approach, teams catch unknown issues before they hit essential systems.

- Use change management tools (like Ansible or Puppet) to standardize upgrades.

- Automate testing and deployment to cut down on manual errors.

- Keep patch windows short and avoid weekends or high-traffic business hours.
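
The phased approach can be expressed as a small planning function: non-production hosts go first, then production hosts in small batches. The environment labels, batch size, and function name below are assumptions for illustration; a real rollout would usually take this from your inventory or configuration management tool.

```python
def plan_waves(hosts, batch_size=2):
    """Order hosts into rollout waves: non-production first, then production in batches.

    `hosts` maps hostname -> environment; anything other than "production"
    is treated as non-production.
    """
    non_prod = sorted(h for h, env in hosts.items() if env != "production")
    prod = sorted(h for h, env in hosts.items() if env == "production")
    waves = [non_prod] if non_prod else []
    for i in range(0, len(prod), batch_size):
        waves.append(prod[i:i + batch_size])
    return waves
```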

Sticking to a tested plan transforms upgrades from scramble mode to routine operation.

Automation and Continuous Integration

Automating kernel patching through configuration management and CI/CD pipelines reduces human error and increases traceability. Automation enables pre-checks, seamless rollouts, and clear rollback paths.

Key benefits of integrating automation include:

- Repeatable, audited patch routines for compliance needs.

- Easy rollback scripts for failed patches.

- Integration with monitoring tools for real-time health checks.
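
The control flow behind an automated patch run is small enough to sketch directly: run pre-checks, apply the change, verify, and roll back if verification fails. The callables passed in are placeholders for your own tooling, and the return values are hypothetical labels rather than anything a particular CI system defines.

```python
def run_upgrade(pre_checks, apply, verify, rollback):
    """Run pre-checks, apply the change, verify it, and roll back on failed verification.

    `pre_checks` is a list of (name, callable) pairs where each callable returns
    True on success. Returns "skipped", "upgraded", or "rolled-back".
    """
    failed = [name for name, check in pre_checks if not check()]
    if failed:
        return "skipped"  # nothing was changed, so nothing to roll back
    apply()
    if verify():
        return "upgraded"
    rollback()
    return "rolled-back"
```

Keeping the rollback path in the same routine as the upgrade means it gets exercised and reviewed alongside it, rather than written in a hurry during an incident.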

Adopting this model lets teams spot failures early and respond fast, before regular users even notice.

Integrity and Security with Advanced Checks

Before and after every kernel change, best-in-class teams check system integrity using tools like Tripwire or AIDE. Scheduled scans catch unauthorized alterations, while security baselines confirm configurations have not slipped.

- Review logs using tools like logwatch or the ELK stack.

- Scan for vulnerabilities with OpenVAS or Nessus.

- Apply security benchmarks (CIS or DISA STIG) as part of the process.
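
To show the idea behind file integrity checking, the sketch below hashes every file under a directory and compares two snapshots. It is a simplified illustration of the before-and-after comparison, not a replacement for Tripwire or AIDE, which also track permissions, ownership, and other attributes. The function names are hypothetical.

```python
import hashlib
import os


def hash_tree(root):
    """Return {relative_path: sha256_hex} for every regular file under root."""
    digests = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for filename in filenames:
            path = os.path.join(dirpath, filename)
            if os.path.islink(path) or not os.path.isfile(path):
                continue  # skip symlinks and special files
            with open(path, "rb") as handle:
                digest = hashlib.sha256(handle.read()).hexdigest()
            digests[os.path.relpath(path, root)] = digest
    return digests


def diff_baseline(before, after):
    """Return added, removed, and changed paths between two hash_tree() results."""
    return {
        "added": sorted(set(after) - set(before)),
        "removed": sorted(set(before) - set(after)),
        "changed": sorted(p for p in set(before) & set(after) if before[p] != after[p]),
    }
```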

Security is not a checkbox—it is an ongoing cycle, tightened before and after every major update.

Adapting to Zero Trust and Microsegmentation

A shift is happening across the industry: more teams are adopting zero trust principles and network microsegmentation, and they re-verify those controls after every kernel change. Instead of assuming trusted connections, every device and node is checked, even after routine kernel upgrades. This strategy:

- Limits the blast radius of compromise if a patch fails.

- Requires regular reconfirmation of network and application policies after upgrades.

- Pairs well with immutable infrastructure practices.

This level of care is moving from theory to practice in many high-security organizations.

Keeping Up with New Kernel Features and Vendor Capabilities

Emerging Linux kernel releases increasingly focus on security, automation, and cloud-readiness. Features like live patching, eBPF support, and real-time monitoring improve system resilience. Following mailing lists, kernel changelogs, and trusted online resources such as Kernel Newbies keeps teams prepared.

For detailed insight, refer to the latest summary of Linux kernel advancements and enterprise impact.

Building an Internal Knowledge Base

Experienced sysadmins document every upgrade path, issue encountered, and lesson learned. These internal wikis, how-to guides, and runbooks turn real-world patches into repeatable recipes for the next cycle.

Regular knowledge sharing sessions—whether brown-bag meetings, Slack updates, or email threads—help bring junior staff up to speed and capture “tribal knowledge” before it disappears. Over time, this foundation builds organizational resilience and prevents costly mistakes.

Staying current by mixing established procedures with new trends makes every kernel upgrade safer and more predictable. As more teams blend automation, robust documentation, and rigorous security checks, the process gains both speed and confidence. With technology moving quickly, it pays to watch these trends, borrow best practices, and improve with every cycle.

Conclusion

Every step taken to prepare Linux servers for kernel upgrades—reviewing system inventories, selecting proven tools, running full-scale tests, and keeping up with security checks—helps avoid downtime and strengthens resilience against threats. Careful planning and reliable documentation protect critical workloads when new kernel releases arrive.

Now is the time to review your own upgrade procedures, close any gaps in testing or rollback, and update practices based on recent outages and innovations. Regular review and precise recordkeeping make each upgrade safer, quicker, and easier to support. Thank you for reading; your input is welcome as the industry moves toward tighter, more reliable server maintenance.
